WebSocket vs Socket.IO in production: protocol, architecture, and real-world patterns

April 1, 2026

This article is intentionally WebSocket-first:

- WebSocket is the core realtime protocol.

- Socket.IO is a library + custom protocol for faster implementation.

- Critical production concerns: reconnects, congestion, backpressure, duplicates, and ordering.

- A comparison of polling, SSE (Server-Sent Events), and WebSocket to support better architectural choices.


Abbreviation glossary

- HTTP (HyperText Transfer Protocol)

- API (Application Programming Interface)

- RFC (Request for Comments)

- TCP (Transmission Control Protocol)

- SSE (Server-Sent Events)

- WS (WebSocket)

- DX (Developer Experience)

- TTL (Time To Live)

- EIO (Engine.IO Protocol Version)

- JSON (JavaScript Object Notation)

- CDN (Content Delivery Network)

- LB (Load Balancer)

- IO (Input/Output)

- OOM (Out Of Memory; may trigger the OS OOM killer)

- OS (Operating System)

- UX (User Experience)

1. What is WebSocket?

WebSocket is a standard protocol defined by RFC (Request for Comments) 6455 for bidirectional (full-duplex) communication between client and server.

Basic flow:

- Client sends an HTTP (HyperText Transfer Protocol) Upgrade request.

- Server replies `101 Switching Protocols`.

- The connection stays open (long-lived).

- Both sides exchange realtime frames without new HTTP request/response cycles.

Handshake example:

```http
GET /ws HTTP/1.1
Upgrade: websocket
Connection: Upgrade
Sec-WebSocket-Key: ...
Sec-WebSocket-Version: 13
```

Mental model:

Client <-> Server (one long-lived connection).

Common confusion: WebSocket starts as HTTP, but after the upgrade the semantics are no longer those of an HTTP API (Application Programming Interface); you are operating a stateful bidirectional stream.

WEBSOCKET LIFECYCLE (UPGRADE -> DUPLEX -> CLOSE)

The handshake happens over HTTP, and the connection then switches to WebSocket frames.

On disconnect, the client applies a reconnect strategy instead of reconnecting in a tight loop.

WEBSOCKET CHANNEL vs HTTP REQUEST/RESPONSE

WebSocket

Low-latency bidirectional frames on one long-lived connection.

HTTP

Each interaction re-pays request overhead and connection coordination.

1.1 WebSocket handshake internals: how does the browser know what to do?

A WebSocket handshake is a real HTTP request with upgrade headers that ask the server to switch protocols.

Browser request:

```http
GET /ws HTTP/1.1
Host: realtime.example.com
Connection: Upgrade
Upgrade: websocket
Sec-WebSocket-Key: dGhlIHNhbXBsZSBub25jZQ==
Sec-WebSocket-Version: 13
Origin: https://app.example.com
```

Server response when the upgrade is accepted:

```http
HTTP/1.1 101 Switching Protocols
Upgrade: websocket
Connection: Upgrade
Sec-WebSocket-Accept: s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

WEBSOCKET HANDSHAKE DEEP DIVE

The browser does not guess the protocol. It switches to WebSocket mode only when the server returns a handshake response that is valid under RFC 6455.

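Important header roles:

- `Upgrade: websocket`: asks for a protocol switch.

- `Connection: Upgrade`: marks this as hop-by-hop upgrade metadata.

- `Sec-WebSocket-Key`: a random, base64-encoded 16-byte nonce generated by the client.

- `Sec-WebSocket-Accept`: the server's proof that it understood the WebSocket handshake. It is derived from the client's key plus a fixed GUID defined in RFC 6455, and the client verifies it.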

After 101, the channel is no longer HTTP request/response semantics; it becomes a bidirectional WebSocket frame stream.
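RFC 6455 derives the accept value deterministically. The server appends the fixed GUID `258EAFA5-E914-47DA-95CA-C5AB0DC85B11` to the client's `Sec-WebSocket-Key`, hashes the result with SHA-1, and base64-encodes the digest. The sketch below assumes a Node.js runtime because it uses `node:crypto`. `computeAccept` and `isValidAccept` are illustrative names, not a library API. With the example key from the request above, it should reproduce the `Sec-WebSocket-Accept` value in the example response.

```typescript
import { createHash } from "node:crypto";

// Fixed GUID from RFC 6455; every conforming server appends the same value.
const WS_GUID = "258EAFA5-E914-47DA-95CA-C5AB0DC85B11";

// Derive Sec-WebSocket-Accept from the client's Sec-WebSocket-Key.
function computeAccept(secWebSocketKey: string): string {
  return createHash("sha1")
    .update(secWebSocketKey.trim() + WS_GUID)
    .digest("base64");
}

// Client-side check: a conforming client fails the connection if this does not match.
function isValidAccept(sentKey: string, receivedAccept: string): boolean {
  return computeAccept(sentKey) === receivedAccept.trim();
}

console.log(computeAccept("dGhlIHNhbXBsZSBub25jZQ=="));
// prints s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

Because the value depends on a fresh client nonce, a cached or misconfigured intermediary that replays an old response will not pass this check.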

1.2 Why HTTP/1.1 is still common for WebSocket handshake (instead of HTTP/2)

The classic WebSocket upgrade mechanism is defined around HTTP/1.1.

- HTTP/1.1 directly supports `Connection: Upgrade` and `Upgrade: websocket`.

- HTTP/2 does not use hop-by-hop `Connection` semantics in the same way.

- WebSocket over HTTP/2 exists through extended CONNECT (RFC 8441). However, production support across proxies, CDNs (Content Delivery Networks), and LBs (Load Balancers) is still less uniform than HTTP/1.1 in many stacks.

So in real deployments, a common pattern is:

- Handshake through HTTP/1.1 for compatibility.

- Then keep one long-lived TCP (Transmission Control Protocol) socket for realtime frames.

1.3 Ping/Pong vs keep-alive: not the same thing

These are often mixed up:

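- HTTP/TCP keep-alive: transport-level mechanisms that keep idle connections from being closed prematurely. OS-level TCP keep-alive probes typically start only after long idle periods (2 hours by default on Linux).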

- WebSocket ping/pong: protocol-level heartbeat to detect zombie/half-open connections.

A practical policy:

- Server sends ping every 20-30s.

- Client must pong within timeout (e.g. 10s).

- Timeout triggers socket close + reconnect policy.

HEARTBEAT: PING/PONG + TIMEOUT DETECTION

Ping/pong measures liveness at the WebSocket protocol layer instead of relying only on TCP keep-alive.

When a pong is overdue, close the connection proactively and trigger the reconnect policy so the system recovers quickly.

Key insight: transport keep-alive alone does not guarantee application health; ping/pong is what gives fast liveness detection and self-healing.
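The ping/pong policy above reduces to a small amount of per-connection bookkeeping. The following is a minimal sketch. It assumes the server drives the tracker from a periodic timer and passes in the current time, so the logic can be tested without real timers. `HeartbeatTracker` and its action names are hypothetical. In a Node.js server built on the `ws` package, the actions would map as follows:

- `"send-ping"` maps to `ws.ping()`.
- `onPong()` is called from the `"pong"` event.
- `"close-and-reconnect"` maps to `ws.terminate()`, after which the client's reconnect policy takes over.

```typescript
type HeartbeatAction = "wait" | "send-ping" | "close-and-reconnect";

// Per-connection liveness bookkeeping (sketch). Times are milliseconds.
class HeartbeatTracker {
  private lastPingAt: number;
  private awaitingPong = false;
  private readonly pingIntervalMs: number;
  private readonly pongTimeoutMs: number;

  constructor(connectedAt: number, pingIntervalMs = 25_000, pongTimeoutMs = 10_000) {
    this.lastPingAt = connectedAt; // first ping goes out one interval after connecting
    this.pingIntervalMs = pingIntervalMs; // inside the 20-30s range above
    this.pongTimeoutMs = pongTimeoutMs; // "must pong within 10s"
  }

  // Call on every timer tick; returns what the connection owner should do now.
  tick(now: number): HeartbeatAction {
    if (this.awaitingPong) {
      // A ping is outstanding: declare the connection dead once the timeout passes.
      return now - this.lastPingAt >= this.pongTimeoutMs ? "close-and-reconnect" : "wait";
    }
    if (now - this.lastPingAt >= this.pingIntervalMs) {
      this.lastPingAt = now;
      this.awaitingPong = true;
      return "send-ping";
    }
    return "wait";
  }

  // Call whenever a pong frame arrives for this connection.
  onPong(): void {
    this.awaitingPong = false;
  }
}

// Simulated clock: ping at 25s, no pong arrives, connection declared dead at 35s.
const hb = new HeartbeatTracker(0);
console.log(hb.tick(25_000)); // send-ping
console.log(hb.tick(30_000)); // wait
console.log(hb.tick(35_000)); // close-and-reconnect
```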

2. What is Socket.IO?

Socket.IO is a library + custom protocol (not raw WebSocket).

It provides practical abstractions:

- Event API (`emit`/`on`).

- Auto-reconnect.

- Rooms and namespaces.

- Auth middleware.

- Transport fallback.

Key point:

- Socket.IO prefers WebSocket.

- If upgrade fails, it can fall back to HTTP long polling.

SOCKET.IO TRANSPORT NEGOTIATION (WS FIRST, POLLING FALLBACK)

Socket.IO keeps the app running by degrading the transport instead of hard-failing the connection.

2.1 Socket.IO layers (what actually runs under the hood)

Socket.IO is not only emit/on; it is a layered stack:

```text
[ Socket.IO protocol ]
          ↓
[ Engine.IO ]
          ↓
[ WebSocket or HTTP (polling) ]
          ↓
[ TCP (Transmission Control Protocol) ]
```

Layer responsibilities:

- Socket.IO protocol: event packets, namespaces, ack semantics.

- Engine.IO: transport management, heartbeat, upgrade, and disconnection detection. Reconnection itself is driven by the Socket.IO client's Manager, one layer up.

- Transport: WebSocket or HTTP long polling.

- TCP: byte transport.

SOCKET.IO STACK: PROTOCOL -> ENGINE -> TRANSPORT -> TCP

An application packet passes through several layers before it becomes bytes on the wire. Realtime debugging should therefore isolate failures layer by layer.

A raw WebSocket server only understands its own application-defined payloads. Socket.IO packets need a compatible parser and protocol implementation on the server side.

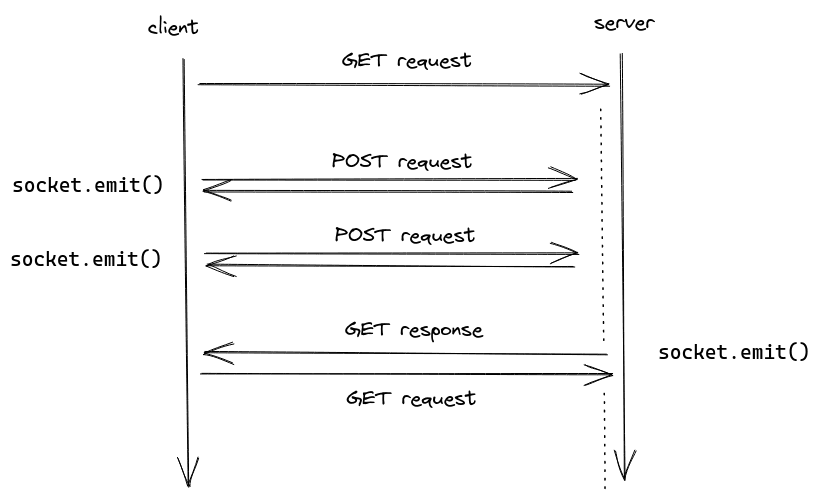

2.2 Actual Socket.IO connection flow

With its default settings, the Socket.IO client does not jump to WebSocket immediately. It starts with HTTP long polling for baseline connectivity and then tries to upgrade.

- Start with polling (plain HTTP):

```http
GET /socket.io/?EIO=4&transport=polling
```

`EIO=4` means Engine.IO protocol version 4.

- Then attempt a WebSocket upgrade:

```http
GET /socket.io/?EIO=4&transport=websocket
Upgrade: websocket
Connection: Upgrade
```

- If the upgrade fails (proxy/firewall/policy constraints):

  - Stay in polling mode.

  - The app still works, but with higher overhead and latency.

HTTP LONG POLLING CYCLE (GET HOLD -> RESPONSE -> NEXT GET)

Long polling does not hold a fixed duplex channel the way WebSocket does. Instead, it creates a repeating chain of request/response cycles to emulate realtime.

2.3 Wire payload: why raw WebSocket servers cannot directly parse Socket.IO events

If you emit:

```javascript
socket.emit("chat", { msg: "hello" });
```

the wire payload is a Socket.IO protocol packet, for example:

```text
42["chat",{"msg":"hello"}]
```

Where:

- `4`: Engine.IO "message" packet type.

- `2`: Socket.IO "EVENT" packet type.

- Remainder: a JSON (JavaScript Object Notation) array holding the event name and its arguments.

This is not an arbitrary application payload, so a raw WebSocket (WS) server cannot interpret it unless it implements compatible Socket.IO (and Engine.IO) packet parsing.

SOCKET.IO PACKET FLOW (EMIT -> ENCODE -> TRANSPORT -> HANDLE)
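To make the framing concrete, here is a deliberately minimal decoder for the text-packet case shown above. It is a sketch, not the official `socket.io-parser`, and it makes these assumptions:

- It handles only an Engine.IO message (`4`) carrying a Socket.IO EVENT (`2`).
- It accepts an optional namespace prefix and an optional numeric ack id.
- It ignores binary attachments entirely.

`decodeEventPacket` is a hypothetical name.

```typescript
interface DecodedEvent {
  namespace: string;
  ackId?: number;
  event: string;
  args: unknown[];
}

// Minimal decoder for text EVENT packets such as 42["chat",{"msg":"hello"}] (sketch).
function decodeEventPacket(wire: string): DecodedEvent | null {
  // wire[0]: Engine.IO packet type ("4" = message); wire[1]: Socket.IO type ("2" = EVENT).
  if (wire[0] !== "4" || wire[1] !== "2") return null;
  let rest = wire.slice(2);

  // Optional namespace such as "/admin," (the default "/" namespace is omitted on the wire).
  let namespace = "/";
  if (rest.startsWith("/")) {
    const comma = rest.indexOf(",");
    if (comma === -1) return null;
    namespace = rest.slice(0, comma);
    rest = rest.slice(comma + 1);
  }

  // Optional acknowledgement id: digits immediately before the JSON payload.
  const ackMatch = /^\d+/.exec(rest);
  const ackId = ackMatch ? Number(ackMatch[0]) : undefined;
  if (ackMatch) rest = rest.slice(ackMatch[0].length);

  let payload: unknown;
  try {
    payload = JSON.parse(rest);
  } catch {
    return null; // not valid JSON: not a text EVENT packet this sketch understands
  }
  if (!Array.isArray(payload) || typeof payload[0] !== "string") return null;
  const [event, ...args] = payload as [string, ...unknown[]];
  return { namespace, ackId, event, args };
}

console.log(JSON.stringify(decodeEventPacket('42["chat",{"msg":"hello"}]')));
// {"namespace":"/","event":"chat","args":[{"msg":"hello"}]}
```

Even this reduced case has to understand two protocol layers. That is the practical reason a raw WebSocket server and a Socket.IO client do not interoperate out of the box.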

3. Core difference: Socket.IO != WebSocket

Socket.IO is not just a thin wrapper around WebSocket.

Architecture differences:

- WebSocket: standard low-level protocol.

- Socket.IO: realtime framework + custom protocol on top of Engine.IO.

- A raw WebSocket server cannot directly talk to a Socket.IO client without protocol compatibility.

In short: Socket.IO adds an application-level protocol for DX (Developer Experience) and reliability, at the cost of extra protocol overhead.

4. Quick comparison

| Criteria | WebSocket | Socket.IO |
|---|---|---|
| Type | Protocol | Library + protocol |
| Standard | RFC 6455 | Custom (Socket.IO over Engine.IO) |
| Transport | WebSocket only | WebSocket (WS) + HTTP long-polling fallback |
| Reconnect | Manual | Built-in |
| Event system | No built-in API | `emit`/`on` |
| Rooms / namespaces | Not built-in | Built-in |
| Overhead | Lower | Higher |

5. Simple examples

5.1 Raw WebSocket

```javascript
const ws = new WebSocket("wss://example.com");

ws.onmessage = (event) => {
  console.log(event.data);
};

ws.onopen = () => {
  ws.send("hello");
};
```

Characteristics: low-level. You implement reconnect, retry, heartbeat, and backpressure yourself.

5.2 Socket.IO

```javascript
import { io } from "socket.io-client";

const socket = io("https://example.com", {
  transports: ["websocket", "polling"],
});

socket.on("message", (data) => {
  console.log(data);
});

socket.emit("message", { hello: "world" });
```

Characteristics: built-in event abstraction, reconnect behavior, and a room/namespace model.

5.3 Rooms / namespaces for practical fan-out control

In production this is more than convenience:

- Reduce fan-out by targeting only relevant groups.

- Apply authorization boundaries per domain (`project:42`, `org:abc`).

- Isolate tenant/module traffic to reduce cross-tenant noise and overload.

SOCKET.IO ROOMS / NAMESPACES BROADCAST MODEL

Socket Server

An event emitted to a room is broadcast only to the sockets in that room. This reduces fan-out and scopes permissions to the business context.
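The fan-out savings come from the server keeping a room-to-members index and iterating only over the target room. Socket.IO maintains this index for you: the server calls `socket.join(room)` to add a socket, and `io.to(room).emit(...)` broadcasts to the room. The following is a hypothetical, library-free sketch of that bookkeeping, which may help if you build rooms on top of raw WebSocket. `RoomRegistry` and its methods are illustrative names, and the `send` callback stands in for writing to a socket.

```typescript
type Send = (socketId: string, event: string, data: unknown) => void;

// Room membership index (sketch): room name -> set of socket ids.
class RoomRegistry {
  private rooms = new Map<string, Set<string>>();

  join(socketId: string, room: string): void {
    let members = this.rooms.get(room);
    if (!members) {
      members = new Set();
      this.rooms.set(room, members);
    }
    members.add(socketId);
  }

  leave(socketId: string, room: string): void {
    const members = this.rooms.get(room);
    members?.delete(socketId);
    if (members && members.size === 0) this.rooms.delete(room); // avoid leaking empty rooms
  }

  // Call on disconnect, or when the socket loses permission for its rooms.
  leaveAll(socketId: string): void {
    for (const room of [...this.rooms.keys()]) this.leave(socketId, room);
  }

  // Fan-out touches only the room's members, not every connected socket.
  broadcast(room: string, event: string, data: unknown, send: Send): number {
    const members = this.rooms.get(room);
    if (!members) return 0;
    for (const id of members) send(id, event, data);
    return members.size;
  }
}

const registry = new RoomRegistry();
registry.join("s1", "project:42");
registry.join("s2", "project:42");
registry.join("s3", "org:abc");
const delivered: string[] = [];
registry.broadcast("project:42", "task:updated", { id: 7 }, (id) => delivered.push(id));
console.log(delivered); // [ 's1', 's2' ]
```

Room names such as `project:42` double as authorization scopes. The join path is the natural place to check that a socket may see a given project or org.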

6. Auto-reconnect in production: recover safely, avoid reconnect storms

Reconnect is a reliability strategy, not only a UX (User Experience) convenience.

Recommended policy:

- Exponential backoff + jitter.

- Retry cap + degraded mode.

- Different handling for auth failures (`401`/`403`) vs transient network failures.

- Stream resume with `lastEventId` or a sequence offset.

- Thundering-herd safeguards: randomized jitter and per-client retry limits.

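Sketch:

```typescript
declare function connect(opts: { lastEventId?: string }): Promise<void>; // hypothetical app hook
declare function enterDegradedMode(): void; // hypothetical: offline UI, polling fallback, etc.
let lastEventId: string | undefined; // updated as events are applied
let attempts = 0;
const sleep = (ms: number) => new Promise((resolve) => setTimeout(resolve, ms));

function nextDelayMs(): number {
  const base = Math.min(30_000, 500 * 2 ** attempts); // exponential, capped at 30s
  const jitter = Math.floor(Math.random() * 400); // de-synchronize clients
  return base + jitter;
}

async function reconnect(): Promise<void> {
  while (attempts < 10) { // retry cap
    attempts += 1;
    await sleep(nextDelayMs());
    try {
      await connect({ lastEventId });
      attempts = 0; // healthy again: reset the backoff
      return;
    } catch { /* transient failure: back off and try again */ }
  }
  enterDegradedMode(); // cap reached: stop hammering the server
}
```

After reconnect succeeds: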

- Re-authenticate and re-subscribe channels.

- Replay bounded history only.

- Re-evaluate authorization against current server state.

- Verify sequence gaps before accepting stream as healthy.

RECONNECT + BACKPRESSURE + BATCH CONTROL

Avoid reconnecting in rapid bursts, which causes reconnect storms.

When a queue exceeds its threshold, throttle or degrade non-critical events.

Emitting less often stabilizes CPU/network usage and lowers p99 latency.

AUTH-AWARE RECONNECT FLOW

If a reconnect attempt gets 401/403, do not retry blindly. Refresh the token or require the user to log in again.

Once auth is valid again, re-subscribe to rooms/channels and resume from the last acknowledged offset.
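The auth-aware branch is easiest to keep correct as one pure decision function that the reconnect loop consults before sleeping. The following is a minimal sketch, and the failure shape and action names are hypothetical. It assumes the client can tell three cases apart:

- An HTTP status on a rejected handshake (for example from a token check).
- A WebSocket close code.
- A plain network failure.

It treats close code `1008` (policy violation, defined in RFC 6455) as an auth rejection. Your server may signal this differently, for example with a private 4000-4999 code.

```typescript
// What the client learned about the last failed attempt (hypothetical shape).
type ConnectFailure =
  | { kind: "http"; status: number } // handshake rejected with an HTTP status
  | { kind: "close"; code: number } // WebSocket closed with a close code
  | { kind: "network" }; // timeout, DNS failure, offline, abrupt drop

type ReconnectAction = "retry-with-backoff" | "refresh-token-then-retry" | "require-login" | "give-up";

function decideReconnect(failure: ConnectFailure, tokenRefreshable: boolean): ReconnectAction {
  switch (failure.kind) {
    case "network":
      return "retry-with-backoff"; // transient: normal backoff + jitter
    case "http":
      if (failure.status === 401) return tokenRefreshable ? "refresh-token-then-retry" : "require-login";
      if (failure.status === 403) return "require-login"; // permission denied: retrying alone won't help
      return failure.status >= 500 || failure.status === 429 ? "retry-with-backoff" : "give-up";
    case "close":
      if (failure.code === 1008) return tokenRefreshable ? "refresh-token-then-retry" : "require-login";
      if (failure.code === 1000) return "give-up"; // normal, intentional close
      return "retry-with-backoff"; // e.g. 1001 going away, 1006 abnormal, 1011 server error
  }
}
```

Keeping this logic separate from the timer code means the "never retry blindly on 401/403" rule can be unit-tested on its own.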

7. Real-world WebSocket/Socket.IO problems

7.1 Socket congestion during burst traffic

Symptoms:

- Rapid queue growth.

- Latency spikes.

- Memory inflation from pending buffers.

Mitigation:

- Batch updates in 20-100ms windows.

- Throttle non-critical streams (typing/presence).

- Coalesce updates to latest state snapshots.
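Coalescing is different from batching. Rather than sending every intermediate update, the server keeps only the latest value per key (say, per user cursor or per presence entry) and flushes that snapshot once per window. The following is a minimal sketch that assumes each update carries a stable key. `Coalescer` is a hypothetical helper, and its `flush()` would be called from the same interval timer that sends batches.

```typescript
// Keeps only the latest value per key between flushes (sketch).
class Coalescer<T> {
  private latest = new Map<string, T>();

  // Overwrites any pending value for the same key, so intermediate states are dropped.
  push(key: string, value: T): void {
    this.latest.set(key, value);
  }

  // Returns the pending snapshot (ordered by each key's first write) and resets.
  flush(): Array<[string, T]> {
    const snapshot = [...this.latest.entries()];
    this.latest.clear();
    return snapshot;
  }
}

const cursors = new Coalescer<{ x: number; y: number }>();
cursors.push("user:1", { x: 1, y: 1 });
cursors.push("user:2", { x: 5, y: 5 });
cursors.push("user:1", { x: 9, y: 9 }); // replaces the pending update for user:1
console.log(JSON.stringify(cursors.flush()));
// [["user:1",{"x":9,"y":9}],["user:2",{"x":5,"y":5}]]
```

The outbound message count is then bounded by the number of distinct keys per window rather than by the raw update rate.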

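A simple batching loop that flushes every 50 ms:

```typescript
// Assumes `io` (a Socket.IO Server) and `roomId` come from the surrounding code.
const buffer: unknown[] = [];
const queueUpdate = (update: unknown) => buffer.push(update); // producers enqueue instead of emitting

setInterval(() => {
  if (buffer.length === 0) return;
  // One packet per 50ms window instead of one per update.
  io.to(roomId).emit("updates:batch", buffer.splice(0, buffer.length));
}, 50);
```

7.2 Duplicates and ordering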

With retry/reconnect, duplicates are expected.

Approach:

- Attach an `eventId` to every event.

- Use a short-lived dedup cache at consumers.

- Use a stream-level sequence number when strict ordering is required.

- Keep handlers idempotent by design.

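```typescript
// Hypothetical app hooks: fetch a missing range / apply one event to local state.
declare function requestReplay(fromSeq: number, toSeq: number): void;
declare function applyEvent(payload: unknown): void;
const dedupCache = new Set<string>(); // production: bounded, short-lived cache
let lastSeq = 0; // highest sequence number applied so far

function onEvent(event: { eventId: string; seq: number; payload: unknown }) {
  // Skip redelivered events (same id, or a sequence number already applied).
  if (dedupCache.has(event.eventId) || event.seq <= lastSeq) return;
  // Gap: replay the missing range *including* this event rather than apply out of order.
  if (event.seq > lastSeq + 1) return requestReplay(lastSeq + 1, event.seq);
  applyEvent(event.payload);
  dedupCache.add(event.eventId);
  lastSeq = event.seq;
}
```

ORDERING + DEDUP + REPLAY WINDOW

After a retry or reconnect, a stream may contain duplicates or arrive out of order.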
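A plain `Set` never forgets, so on a long-lived connection the dedup cache grows without bound. The following is a minimal sketch of a time-bounded replacement. It assumes that event ids are unique per stream and that the TTL is longer than the replay window, so a replayed duplicate is still recognized. `TtlDedupCache` is a hypothetical name. It exposes `has`/`add`, so it can stand in for the `Set` used as `dedupCache` above, and the clock is injectable for testing.

```typescript
// Remembers event ids for `ttlMs`, then forgets them (sketch).
class TtlDedupCache {
  private seen = new Map<string, number>(); // eventId -> expiresAt, in insertion order
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(ttlMs: number, now: () => number = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  has(eventId: string): boolean {
    this.evictExpired();
    return this.seen.has(eventId);
  }

  add(eventId: string): void {
    this.evictExpired();
    this.seen.delete(eventId); // re-insert at the end so entries stay sorted by expiry
    this.seen.set(eventId, this.now() + this.ttlMs);
  }

  get size(): number {
    return this.seen.size;
  }

  private evictExpired(): void {
    const t = this.now();
    // Every entry gets the same TTL, so with a non-decreasing clock the Map is ordered
    // by expiry and eviction can stop at the first entry that is still live.
    for (const [id, expiresAt] of this.seen) {
      if (expiresAt > t) break;
      this.seen.delete(id);
    }
  }
}
```

Eviction is amortized into normal `has`/`add` calls, so no extra timer is needed per connection.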

7.3 Backpressure

When producers outpace consumers, define explicit policy:

- Drop oldest (telemetry-like streams).

- Drop newest (queue stability first).

- Temporarily pause or slow producers.

- Split critical vs non-critical channels.

This is often where production systems fail first: not at normal load, but during spikes without a clear backpressure strategy.
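Writing the policy down in code keeps it from becoming an accident of buffer sizes. The following is a minimal sketch of a bounded per-connection send queue with a configurable overflow policy. `BoundedQueue` and the policy names are hypothetical. In the browser, a related signal is `WebSocket.bufferedAmount`, the number of bytes queued by `send()` but not yet transmitted. A producer can check it before sending more.

```typescript
type OverflowPolicy = "drop-oldest" | "drop-newest";

// Per-connection outbound queue with a hard cap (sketch).
class BoundedQueue<T> {
  private items: T[] = [];
  dropped = 0; // expose for metrics and alerting
  private readonly capacity: number;
  private readonly policy: OverflowPolicy;

  constructor(capacity: number, policy: OverflowPolicy) {
    this.capacity = capacity;
    this.policy = policy;
  }

  // Returns false if the new item was rejected.
  offer(item: T): boolean {
    if (this.items.length < this.capacity) {
      this.items.push(item);
      return true;
    }
    this.dropped += 1;
    if (this.policy === "drop-newest") return false; // keep what is already queued
    this.items.shift(); // drop-oldest: stale telemetry is worth less than fresh data
    this.items.push(item);
    return true;
  }

  // Take up to `max` items for the next write to the socket.
  drain(max: number): T[] {
    return this.items.splice(0, max);
  }

  get size(): number {
    return this.items.length;
  }
}
```

`shift()` is O(n), which is fine for small caps. A ring buffer is the usual choice when capacities are large. Splitting critical and non-critical channels then amounts to giving each its own queue with its own policy.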

7.4 Auth/session mismatch

Common cases:

- Token expires while socket is still open.

- User logs out elsewhere but stale socket remains.

- Permission changes while stale room membership persists.

Recommendations:

- Re-validate auth on reconnect.

- Validate auth on critical actions.

- Actively disconnect sockets that no longer have permission.
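Active disconnection usually combines an event-driven path (on logout or role change) with a periodic sweep that catches anything missed. The following is a minimal sketch of the sweep's decision logic. It assumes the server keeps per-socket session metadata, including token expiry and the login session id. `SocketSession`, `findSocketsToDisconnect`, and the `revokedSessions` set are hypothetical. The disconnect itself depends on the stack:

- With Socket.IO, call `socket.disconnect(true)` on the server.
- With the `ws` package, call `ws.close(1008, reason)`.

```typescript
interface SocketSession {
  socketId: string;
  sessionId: string; // login session the socket was opened under
  tokenExpiresAt: number; // epoch ms
}

// Decide which sockets must be cut off right now (sketch).
function findSocketsToDisconnect(
  sockets: SocketSession[],
  revokedSessions: Set<string>, // filled by logout, "log out everywhere", permission changes
  now: number,
  graceMs = 0, // optional slack so a client has time to refresh its token in-band
): string[] {
  return sockets
    .filter((s) => revokedSessions.has(s.sessionId) || s.tokenExpiresAt + graceMs <= now)
    .map((s) => s.socketId);
}

const open: SocketSession[] = [
  { socketId: "s1", sessionId: "A", tokenExpiresAt: 10_000 },
  { socketId: "s2", sessionId: "B", tokenExpiresAt: 5_000 },
  { socketId: "s3", sessionId: "C", tokenExpiresAt: 10_000 },
];
console.log(findSocketsToDisconnect(open, new Set(["C"]), 6_000)); // [ 's2', 's3' ]
```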

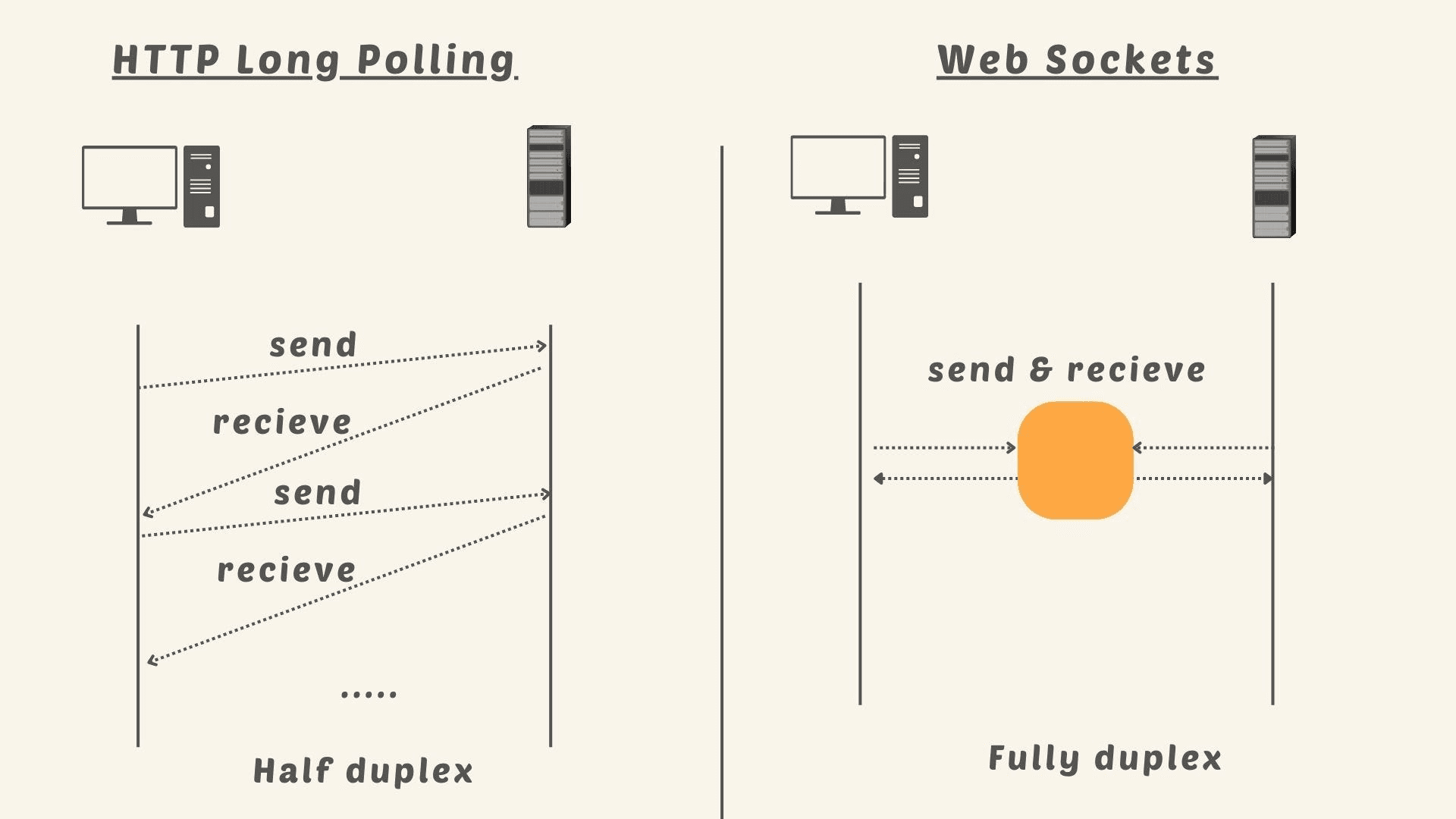

8. Polling vs SSE (Server-Sent Events) vs WebSocket

Polling

- Client periodically requests updates.

- Easy to implement.

- High overhead for tight realtime requirements.

SSE

- One-way server -> client stream over HTTP.

- Great for feed/progress/log push.

- Not natural for duplex interaction.
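Part of SSE's operational simplicity is that its wire format is plain text. The following is a minimal sketch of serializing one event, assuming a server writes the result to a `text/event-stream` response. `formatSseEvent` is a hypothetical helper. When the browser's `EventSource` reconnects, it sends the last seen `id:` back as the `Last-Event-ID` header. This gives SSE built-in resume semantics similar to the `lastEventId` idea in section 6.

```typescript
interface SseEvent {
  id?: string; // echoed back by EventSource as Last-Event-ID on reconnect
  event?: string; // custom event name; omitted means the default "message" event
  data: string;
  retry?: number; // suggested reconnect delay in ms
}

// Serialize one event in text/event-stream format (sketch).
function formatSseEvent(e: SseEvent): string {
  const lines: string[] = [];
  if (e.id !== undefined) lines.push(`id: ${e.id}`);
  if (e.event !== undefined) lines.push(`event: ${e.event}`);
  if (e.retry !== undefined) lines.push(`retry: ${e.retry}`);
  // Multi-line payloads become several data: lines; the client rejoins them with "\n".
  for (const line of e.data.split("\n")) lines.push(`data: ${line}`);
  return lines.join("\n") + "\n\n"; // a blank line terminates the event
}

const frame = formatSseEvent({ id: "42", event: "progress", data: '{"pct":80}' });
console.log(JSON.stringify(frame)); // "id: 42\nevent: progress\ndata: {\"pct\":80}\n\n"
```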

WebSocket

- Bidirectional with low latency.

- Most flexible for interactive realtime.

- Needs stronger operational discipline.

Decision guide:

- One-way push: SSE.

- Duplex + full control: raw WebSocket.

- Duplex + fast Node.js delivery: Socket.IO.

POLLING vs SSE vs WEBSOCKET (FLOW SHAPES)

Polling

Many discrete requests on a fixed interval.

SSE

One-way push from server to client.

WebSocket

A continuous two-way channel with low latency.

9. When to pick WebSocket vs Socket.IO

Pick WebSocket when:

- You need high performance and low overhead.

- You want full protocol control.

- You run custom multi-language backends (Go, Rust, Java, C++).

Pick Socket.IO when:

- You need faster implementation in Node.js ecosystem.

- You want built-in reconnect, rooms, and event middleware.

- You accept abstraction/protocol overhead for delivery speed.

Practical rule:

- Small team + fast delivery: Socket.IO.

- Extreme scale + deep protocol control: raw WebSocket.

Conclusion

WebSocket is the core realtime protocol. Socket.IO is a powerful implementation layer on top.

Understanding this relationship helps you:

- Choose the right mechanism for each use case.

- Design robust production policies (reconnect, dedup, backpressure).

- Avoid architecture mistakes when scaling realtime systems.